IoT to Edge Computing, the practice of processing IoT data close to where it is produced, is reshaping how devices communicate, analyze data, and respond in real time. From smart homes to industrial facilities, the approach brings processing closer to the data source. This shift reduces latency, saves bandwidth, and can improve privacy, because data is filtered locally and only selected results are streamed to the cloud. Combined with edge AI, edge-enabled architectures support faster decisions and more reliable systems across sectors.

Put simply, the concept means moving compute duties from the core cloud to the network edge and nearby devices. Think of it as on-device processing and local analytics that let sensors, gateways, and miniature data centers act immediately. Related terms such as local inference, near-source computing, and boundary processing describe the same shift. In industrial IoT contexts, the practical benefits are usually framed in terms of real-time decisions and safety.

IoT to Edge Computing: A Practical Introduction for Beginners

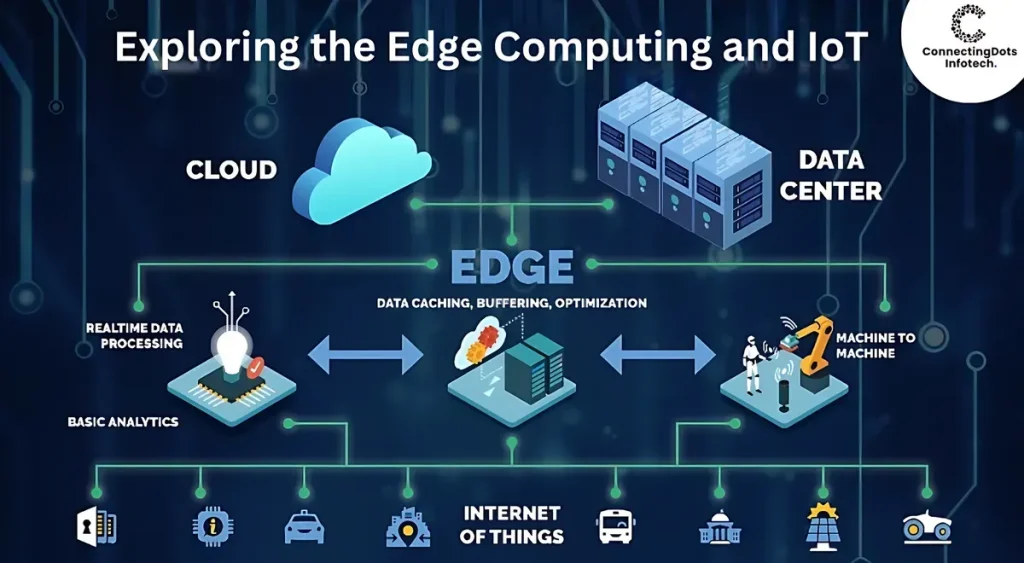

IoT to Edge Computing forms a bridge between the broad world of connected devices and the local intelligence that powers fast, autonomous actions. At its core, it combines IoT basics—sensors, actuators, gateways, and data streams—with edge computing to bring processing closer to where data is produced. This proximity reduces the time from sensor event to decision, enabling real-time responses and smoother operation of factories, buildings, and consumer systems.

By moving analytics and lightweight inference to edge devices, you can cut bandwidth usage, improve privacy by keeping sensitive data on site, and support offline or intermittently connected environments. In this hybrid approach, critical insights are generated locally while broader analyses still run in the cloud when connectivity permits. In practice, an IoT to Edge Computing setup combines devices, gateways, and edge AI models to deliver faster, more reliable outcomes.
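Support for intermittently connected environments is often implemented as a store-and-forward buffer on the gateway. The sketch below is one minimal way to do that, not a reference design: the class name `StoreAndForwardBuffer`, the `send` callback, and the `max_items` limit are all hypothetical names introduced for illustration.

```python
from collections import deque
from typing import Callable, Deque, Dict

Reading = Dict[str, float]


class StoreAndForwardBuffer:
    """Holds readings locally while the uplink is down (hypothetical helper)."""

    def __init__(self, send: Callable[[Reading], bool], max_items: int = 1000) -> None:
        # `send` is an assumed callback that returns True when the cloud accepted the reading.
        self._send = send
        # A bounded deque silently drops the oldest reading once max_items is reached.
        self._pending: Deque[Reading] = deque(maxlen=max_items)

    def submit(self, reading: Reading) -> None:
        """Queue a reading, then try to drain the queue."""
        self._pending.append(reading)
        self.flush()

    def flush(self) -> int:
        """Send queued readings oldest-first and stop at the first failure."""
        sent = 0
        while self._pending:
            if not self._send(self._pending[0]):
                break
            self._pending.popleft()
            sent += 1
        return sent

    def __len__(self) -> int:
        return len(self._pending)
```

Dropping the oldest readings when the buffer is full is a design choice that favors recent data; a deployment that cannot lose history would need persistent storage instead.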

Understanding IoT basics: Devices, Sensors, and Data Flows

The Internet of Things spans billions of devices capable of sensing, communicating, and sometimes acting on the world around them. In practical terms, an IoT system has three layers: the devices and sensors that collect data, the connectivity that moves it, and the applications that turn raw signals into insights. Recognizing these layers helps organizations design interoperable, scalable solutions that fit industrial IoT and enterprise needs.

Raw sensor data can reveal status, trends, or anomalies that matter for efficiency, safety, or user experience. Understanding IoT basics helps teams map data streams to business outcomes, choose appropriate communication protocols, and plan for security, governance, and lifecycle management across devices and gateways.

Edge Computing Explained: Speed, Autonomy, and Local Intelligence

Edge computing processes data near its source, which reduces latency and enables faster decision cycles. By running analytics and even simple machine learning on edge devices or gateways, organizations can often respond within milliseconds, which matters in manufacturing lines, smart buildings, and autonomous systems.

Beyond speed, edge computing saves bandwidth by transmitting only relevant summaries or alerts to the cloud. It also enhances privacy by keeping sensitive data closer to its origin and reduces exposure to centralized data stores. Together with edge AI, the local intelligence can support autonomous devices that operate with minimal cloud dependency.
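As a rough illustration of sending only summaries or alerts, the sketch below collapses a window of raw readings into a single compact message. The function name `summarize_window`, the `alert_above` parameter, and the message fields are assumptions made for this example rather than part of any standard.

```python
from statistics import mean
from typing import Dict, List


def summarize_window(readings: List[float], alert_above: float) -> Dict[str, object]:
    """Reduce a window of raw readings to one compact message for the cloud."""
    if not readings:
        raise ValueError("window must contain at least one reading")
    peak = max(readings)
    return {
        "count": len(readings),
        "mean": round(mean(readings), 2),
        "min": min(readings),
        "max": peak,
        # The alert flag is raised when any reading in the window exceeds the limit.
        "alert": peak > alert_above,
    }


if __name__ == "__main__":
    # Four raw temperature readings become a single upstream message.
    print(summarize_window([20.0, 21.0, 22.0, 85.0], alert_above=80.0))
```

With a window of several hundred readings, the gateway would transmit one small message instead of every raw sample, which is where the bandwidth saving comes from.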

Cloud vs Edge Computing: When to Centralize and When to Decentralize

The cloud vs edge computing comparison centers on latency, reliability, and data volumes. Cloud computing offers vast scalability, centralized management, and rich analytics, but can introduce delays and require continuous connectivity for full capability. In contrast, edge computing delivers fast responses and resilience in environments with limited or intermittent connectivity.

A balanced approach blends both worlds: lightweight, time-sensitive analytics at the edge complemented by deeper, historical analyses in the cloud. This hybrid pattern aligns with modern industrial IoT strategies, enabling factories and smart grids to optimize operations while preserving bandwidth and compliance. Planning should consider data governance, privacy requirements, and total cost of ownership to determine the right distribution of processing tasks.
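One way to reason about the distribution of processing tasks is to write the placement rules down explicitly. The sketch below is purely illustrative: the `Workload` fields, the `place` function, and the rule order are assumptions, and a real decision would also weigh cost, governance, and hardware limits.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Workload:
    name: str
    max_latency_ms: float  # how quickly a result is needed
    needs_history: bool    # requires data beyond what the edge site stores
    sensitive: bool        # data should stay on site


def place(workload: Workload, cloud_round_trip_ms: float) -> str:
    """Return 'edge' or 'cloud' using simple, illustrative rules."""
    # Rule 1 (assumed policy): sensitive data is processed locally.
    if workload.sensitive:
        return "edge"
    # Rule 2: if the cloud round trip alone exceeds the latency budget, stay local.
    if workload.max_latency_ms < cloud_round_trip_ms:
        return "edge"
    # Rule 3: everything else defaults to the cloud, including long-horizon analytics.
    return "cloud"


if __name__ == "__main__":
    for w in (
        Workload("emergency stop", 10, needs_history=False, sensitive=False),
        Workload("monthly trend report", 60_000, needs_history=True, sensitive=False),
    ):
        print(w.name, "->", place(w, cloud_round_trip_ms=120))
```

In this sketch `needs_history` is recorded but does not change the outcome, since workloads that pass the first two rules go to the cloud either way; it is kept to show where a finer-grained policy could branch.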

Edge AI and Industrial IoT: Driving Real-Time Intelligence on the Edge

Edge AI enables machine learning models to run locally on edge devices, gateways, or micro data centers, turning sensor data into actionable insights without round-trips to the cloud. This capability is especially valuable in industrial IoT, where real-time fault detection, predictive maintenance, and quality inspection can be performed on-site with minimal latency.
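To make on-site fault detection concrete, the sketch below flags readings that deviate sharply from a recent window. It uses a rolling z-score, a simple statistical stand-in chosen for illustration rather than a trained machine learning model; the class name `RollingZScoreDetector` and its default `window` and `threshold` values are assumptions.

```python
from collections import deque
from math import sqrt
from typing import Deque


class RollingZScoreDetector:
    """Flags values far from the mean of the most recent readings."""

    def __init__(self, window: int = 20, threshold: float = 3.0) -> None:
        if window < 2:
            raise ValueError("window must hold at least two readings")
        self._values: Deque[float] = deque(maxlen=window)
        self._threshold = threshold

    def update(self, value: float) -> bool:
        """Return True if `value` is anomalous relative to the recent window."""
        is_anomaly = False
        n = len(self._values)
        if n >= 2:
            avg = sum(self._values) / n
            # Sample variance of the readings seen so far in the window.
            variance = sum((v - avg) ** 2 for v in self._values) / (n - 1)
            std = sqrt(variance)
            if std > 0:
                is_anomaly = abs(value - avg) / std > self._threshold
        # The new value joins the window either way, so a sustained shift
        # eventually becomes the new baseline.
        self._values.append(value)
        return is_anomaly
```

Because the detector keeps only a fixed-size window in memory, it can run on a gateway or a constrained device and needs no network access to make a decision.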

Industrial IoT benefits from on-device inference, model updates over secure channels, and ongoing optimization of edge AI workloads. As models become lighter and more efficient, manufacturing floors, energy networks, and logistics hubs gain autonomous decision-making, improved uptime, and reduced bandwidth needs through localized processing.

Roadmap for Deploying an Edge-Enabled IoT System: Security, Architecture, and Scale

Getting started with an edge-enabled IoT system requires a clear roadmap: define objectives, identify data sources, and determine which processing should occur at the edge versus in the cloud. Start with a small pilot, choose appropriate hardware like gateways or compact edge servers, and design a data pipeline that respects privacy, governance, and security.

Key practices include securing devices with robust authentication, encrypting data channels, and implementing lifecycle management for software updates. Planning for scalability means implementing monitoring, orchestration, and interoperability via standard APIs to accommodate new devices and workloads across multiple sites. A thoughtful architecture enables reliable operation, governance, and long-term value from IoT to Edge Computing deployments.
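As a small illustration of device authentication, the sketch below signs each message with a shared secret so that a gateway can check where it came from and whether it was altered. It uses HMAC-SHA256 from the Python standard library. The function names, the message layout, and the assumption that a per-device key has already been provisioned securely are all hypothetical. Note that HMAC authenticates a message but does not encrypt it, so channel encryption (for example TLS) would still be needed.

```python
import hashlib
import hmac
import json
from typing import Dict


def sign_message(payload: Dict[str, object], key: bytes) -> Dict[str, str]:
    """Serialize the payload deterministically and attach an HMAC-SHA256 tag."""
    body = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    tag = hmac.new(key, body.encode("utf-8"), hashlib.sha256).hexdigest()
    return {"body": body, "tag": tag}


def verify_message(message: Dict[str, str], key: bytes) -> bool:
    """Recompute the tag and compare it in constant time."""
    expected = hmac.new(key, message["body"].encode("utf-8"), hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, message["tag"])


if __name__ == "__main__":
    device_key = b"demo-key-not-for-production"  # placeholder secret for the example
    msg = sign_message({"device": "pump-7", "temp": 71.5}, device_key)
    print(verify_message(msg, device_key))
```

A shared-secret scheme like this is simple but means every verifier holds the device key; certificate-based identity is a common alternative when that is not acceptable.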

Frequently Asked Questions

What is IoT to Edge Computing, and how does it relate to IoT basics?

IoT to Edge Computing refers to processing data near its source rather than sending everything to a distant cloud. It builds on IoT basics (the network of sensors, devices, and data streams) and adds the main benefits of edge computing: lower latency, reduced bandwidth, and improved privacy. In short, IoT to Edge Computing enables faster decisions and more reliable operations for connected systems.

How does cloud vs edge computing affect IoT to Edge Computing deployments?

The cloud vs edge computing choice determines where data is processed in an IoT to Edge Computing setup. Edge processing delivers real-time responses and resilience when connectivity is limited, while the cloud provides scalable analytics and long-term storage. Most deployments blend both, running time-sensitive tasks at the edge and moving richer analytics to the cloud.

What is edge AI in the context of IoT to Edge Computing?

Edge AI runs machine learning models directly on edge devices or gateways, enabling instant in-situ inference. This aligns with IoT to Edge Computing by reducing cloud calls, saving bandwidth, and supporting offline operation. As models become smaller and hardware more capable, edge AI is practical for both industrial and consumer applications.

How can industrial IoT benefit from IoT to Edge Computing?

Industrial IoT can leverage IoT to Edge Computing for on-site data processing, enabling real-time monitoring, predictive maintenance, and immediate safety alerts. Edge computing reduces downtime and maintenance costs while preserving data sovereignty and privacy by keeping sensitive information local when needed. This leads to safer, more efficient and resilient industrial operations.

What are the key components of an IoT to Edge Computing architecture?

Core components include IoT devices and sensors, gateways or edge servers for local processing, edge analytics software and edge AI models for near-source insights, and the cloud for long-term storage and orchestration. Security is a cross-cutting concern across all layers, supported by robust device authentication, secure data channels, and reliable update mechanisms.

What are best practices and common challenges for IoT to Edge Computing implementations?

Common challenges include securing many distributed devices, achieving interoperability across vendors, and maintaining data governance. Best practices involve adopting standard protocols and open APIs, implementing secure firmware updates, and designing for scalable edge workloads with centralized monitoring and automated updates. Plan for connectivity variability, performance testing, and governance to ensure sustainable, secure deployments.

| Aspect | Key Points |
|---|---|
| IoT Basics | IoT includes billions of devices that sense, communicate, and act; three-layer architecture (devices/sensors, connectivity, analytics); data reveals status, trends, and anomalies for efficiency, safety, and user experience. |
| What is Edge Computing | Processing data near its source to reduce latency, enable faster responses, and save bandwidth; improves privacy by keeping data local. |
| Why IoT and Edge Belong Together | Local processing enables real-time decisions; hybrid models offload heavy tasks to edge servers; reduces cloud costs and supports offline scenarios. |
| Common Patterns | Shift from cloud-centric to edge-enabled, with essential decisions at the edge and longer-term analytics in the cloud; example: smart factory anomaly detection at edge; cloud data lakes for aggregation. |
| Key Components | IoT devices; gateways/edge servers; edge analytics/edge AI; cloud for storage and orchestration; security across all layers. |
| Practical Use Cases | Manufacturing (predictive maintenance), energy/utilities (grid optimization), smart cities (traffic, safety), healthcare (real-time alerts); edge AI enables autonomous responses. |
| Cloud vs Edge Tradeoffs | Cloud: scalable, centralized analytics but higher latency and connectivity dependency. Edge: fast, resilient, bandwidth savings. Often a balanced hybrid approach. |
| Roadmap to Getting Started | Define objective, identify data sources, choose edge hardware, implement secure identity, pilot small project, iterate and scale. |
| Edge AI and Advancements | Run ML models at the edge for classification, anomaly detection, and predictions; enables operation in remote or low-connectivity environments. |
| Challenges and Best Practices | Security across distributed devices, interoperability via standards, data governance, scalability, monitoring and updates. |